Economists helped the U.S. government earn about seven billion dollars in its first big spectrum auction—without raising a single tax. In today’s episode, we step into tense boardrooms, online ad wars, and even biology labs to see how game theory quietly calls the shots.

In a doctoral thesis of just 27 pages, John Nash proved a result that now shapes everything from Google’s ad system to diplomatic standoffs between nuclear powers. Today, we zoom in on a simple but unsettling question: if everyone involved is “rational,” why do smart people and smart organizations still walk straight into bad outcomes?

Think of two rival CEOs deciding whether to slash prices, two political parties choosing how hard to attack, or two countries weighing whether to arm or de‑escalate. Each side’s best move depends on what they *expect* the other to do—and those expectations can trap them in outcomes none of them actually like.

We’ll explore dominant strategies that feel safe but backfire, Nash equilibria that are stable yet inefficient, and one surprisingly human strategy—“tit‑for‑tat”—that turns out to win in long‑run relationships.

Game theory shines when “doing your best” depends on guessing what others think *you* will do. In real life, that’s messy: managers worry about rivals, voters watch polls, and even online platforms anticipate user reactions. Strategic decisions start to look less like solving a puzzle and more like playing high‑speed chess on a foggy board. Misread one move, and you lock into patterns that are hard to escape—price wars, political gridlock, or endless mistrust. To see how this works, we’ll move from theory into a few concrete, high‑stakes strategic stories.

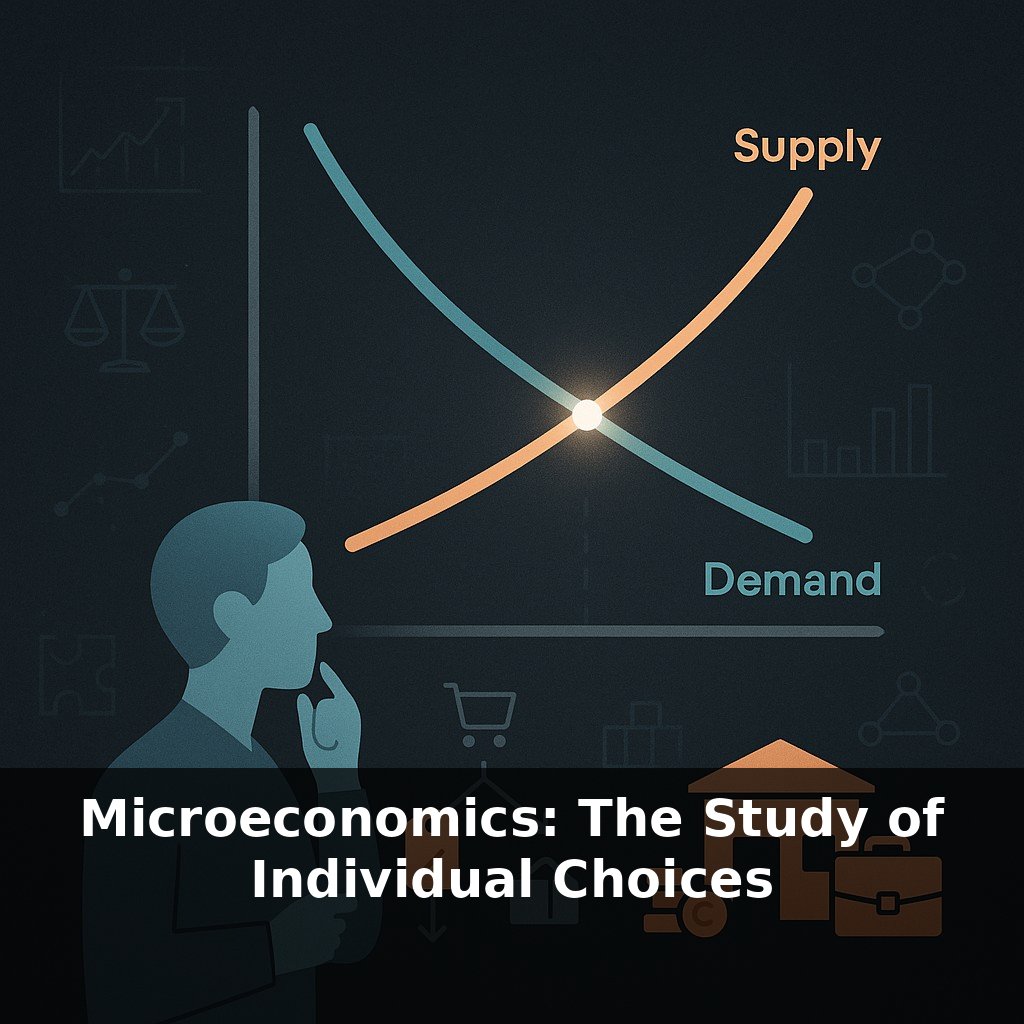

Start with a puzzle that even seasoned negotiators get wrong: two companies are bidding for the same client. If they both “go aggressive” on price, their margins collapse. If they both keep prices high, they earn well. But if *one* cuts while the other holds, the discounter wins big. Each side thinks, “If they stay high, I should cut. If they cut, I must cut too.” They walk in separately—and walk out locked into the worst joint outcome.
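To make the trap concrete, here is a minimal Python sketch of that bidding puzzle. The profit figures are invented purely for illustration (they are not from any real case), and the names `PAYOFFS`, `best_replies`, and `pure_nash_equilibria` are hypothetical helpers. The code checks every combination of moves and keeps only those where neither firm could do better by switching on its own.

```python
from itertools import product

# Hypothetical profits in $M as (firm A, firm B); illustrative numbers only.
PAYOFFS = {
    ("high", "high"): (10, 10),  # both hold prices: both earn well
    ("high", "cut"): (2, 14),    # B undercuts A: the discounter wins big
    ("cut", "high"): (14, 2),    # A undercuts B
    ("cut", "cut"): (4, 4),      # both go aggressive: margins collapse
}
ACTIONS = ("high", "cut")


def best_replies(player, other_action):
    """Actions that maximize `player`'s payoff (0 = A, 1 = B) given the rival's action."""
    def payoff(action):
        profile = (action, other_action) if player == 0 else (other_action, action)
        return PAYOFFS[profile][player]

    top = max(payoff(a) for a in ACTIONS)
    return {a for a in ACTIONS if payoff(a) == top}


def pure_nash_equilibria():
    """Profiles where each firm's action is a best reply to the other's."""
    return [
        (a, b)
        for a, b in product(ACTIONS, ACTIONS)
        if a in best_replies(0, b) and b in best_replies(1, a)
    ]


equilibria = pure_nash_equilibria()
print(equilibria)  # [('cut', 'cut')]
```

With these assumed numbers, cutting is the best reply whether the rival holds or cuts, so both firms land on the one cell with the lowest combined profit (8 in total, versus 20 if both had held).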

This is where game theory stops being abstract math and starts feeling uncomfortably personal. The framework forces you to ask three questions you might normally skip:

1. **What exactly are the payoffs?** Not just profit, but also career risk, reputation, and political pressure. A regulator might prefer a cooperative industry outcome, yet personally gain more attention and power in a crisis. Writing the payoffs out as a table often reveals why “obvious” win‑wins never materialize.

2. **What can each player credibly commit to?** Talk is cheap; structure is not. A firm that publicly announces a price‑matching policy—or invests in extra capacity—changes rivals’ expectations. In merger talks, agreeing on binding disclosure rules and penalties reshapes the strategic landscape before any offer is made.

3. **What information is hidden?** Most real conflicts are games of incomplete information: you don’t know your rival’s true costs, a voter’s private values, or a negotiator’s fallback options. Here, *signals* matter. A startup buying a small, expensive ad slot on prime‑time TV might be burning cash just to signal strength to competitors and investors.

Think of a coach designing a playbook: the goal isn’t to script every move, but to anticipate how opponents will respond, and to make certain reactions unattractive. In business and politics, that means redesigning contracts, rules, and communication so that the “self‑interested” move lines up better with the collective outcome.

This helps explain why Google’s ad system or modern auctions look so strange from the outside: they’re engineered so that, when each participant simply follows their own incentive, the overall result is far closer to what a planner would have wanted—without anyone needing to be an angel.
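One textbook example of that kind of engineering is the sealed-bid second-price auction, where the winner pays the runner-up's bid. The sketch below is a simplified illustration, not a description of how any real ad platform or spectrum auction works; the bidder names, the value of 7, and the 0 to 10 bid range are all assumptions. It brute-forces every bid pair to check that bidding your true value is never worse than any other bid.

```python
def second_price_auction(bids):
    """Highest bid wins and pays the second-highest bid.

    `bids` maps bidder name -> bid. Ties go to the alphabetically first
    name (an arbitrary tie-break chosen for this sketch).
    """
    ranked = sorted(bids.items(), key=lambda item: (-item[1], item[0]))
    winner = ranked[0][0]
    price = ranked[1][1]
    return winner, price


def utility(name, value, bids):
    """Winner's surplus is value minus price paid; losers get zero."""
    winner, price = second_price_auction(bids)
    return value - price if winner == name else 0


# Assumed setup: "me" values the item at 7; all bids are whole numbers 0..10.
my_value = 7
truthful_is_never_worse = all(
    utility("me", my_value, {"me": my_value, "rival": rival_bid})
    >= utility("me", my_value, {"me": my_bid, "rival": rival_bid})
    for rival_bid in range(11)
    for my_bid in range(11)
)
print(truthful_is_never_worse)  # True
```

Because your own bid only decides whether you win, not what you pay, shading it down can only lose you auctions you wanted, and inflating it can only win you auctions you would regret.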

A star basketball player deciding whether to pass or shoot is doing quiet game theory. If defenses always double‑team them, passing is better; if defenders hang back, taking the shot dominates. Coaches bake this into set plays: options are designed so that *whatever* the defender does, one teammate ends up with a good look. Strategy here isn’t about genius on every possession; it’s about pre‑building patterns that turn opponents’ best replies into something you can live with.

Strategic design shows up in contracts too. Venture capital term sheets, for example, often give founders extra equity if they hit milestones that are *hard to fake*—like revenue from independent customers, not just “growth at any cost.” That makes the founder’s most self‑interested move (chasing real users) line up with the investor’s goal (building a durable business).

A quick exercise to try right away: pick one recurring interaction (at work, in a group chat, or on a team) and sketch a simple “if they do X, I’ll do Y” map. Then tweak one rule so that, if everyone followed it, you’d all end up better off.

As connected devices negotiate on our behalf, game‑theoretic rules become a kind of hidden operating system for daily life. Your fridge might “bargain” for cheap power at night while your car “votes” for safer traffic patterns, the way routers quietly share internet bandwidth now. But if those rules are tuned badly, algorithms could learn to stall, collude, or punish rivals. The frontier isn’t just smarter strategies; it’s governance that keeps these invisible games fair and resilient.

In the end, strategic thinking is less about outsmarting others and more about redesigning the “rules of the room.” From workplace bonus schemes to how we share online bandwidth, small tweaks in incentives can flip rivalries into coordination. The open question is how widely we’ll apply these tools—only in markets, or in how communities and institutions make decisions too?

Here’s your challenge this week: Pick one recurring interaction in your life that feels a bit “game-like” (negotiating deadlines with your manager, splitting chores with a roommate, or deciding where to go with friends) and model it as a simple payoff matrix with at least two options for you and two for them. Then, deliberately change your strategy for the next three interactions: first play it “selfishly,” then “cooperatively,” then as a “tit-for-tat” responder who mirrors their last move. Track the actual outcomes (who got what, how each of you felt, and whether cooperation increased or broke down), and at the end of the week decide which strategy you’ll commit to using in that situation for the next month.
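If you want to rehearse the three approaches before trying them on real people, here is a small Python sketch of a repeated two-player game. The per-round payoffs are hypothetical numbers in the usual prisoner's dilemma shape, and the function names `selfish`, `cooperative`, `tit_for_tat`, and `play` are made up for this example.

```python
# Hypothetical per-round payoffs as (mine, theirs); "C" = cooperate, "D" = defect.
PAYOFF = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}


def selfish(their_history):
    """Always defect, whatever the other side has done."""
    return "D"


def cooperative(their_history):
    """Always cooperate, whatever the other side has done."""
    return "C"


def tit_for_tat(their_history):
    """Open with cooperation, then mirror the other side's last move."""
    return their_history[-1] if their_history else "C"


def play(strategy_a, strategy_b, rounds=10):
    """Run a repeated game and return the total scores (a, b)."""
    moves_a, moves_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        # Each side chooses based only on the other side's past moves.
        a = strategy_a(moves_b)
        b = strategy_b(moves_a)
        gain_a, gain_b = PAYOFF[(a, b)]
        score_a += gain_a
        score_b += gain_b
        moves_a.append(a)
        moves_b.append(b)
    return score_a, score_b


print(play(cooperative, selfish))      # (0, 50)
print(play(tit_for_tat, selfish))      # (9, 14)
print(play(tit_for_tat, cooperative))  # (30, 30)
```

Under these assumed payoffs, the mirroring player gets exploited only once by a pure defector yet still sustains full cooperation with a cooperative partner. Real interactions have messier payoffs, so treat the numbers as a thinking aid rather than a prediction.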